Trust in Security Is Not a Promise. It's a Proof.

TL;DR: Businesses are feeding sensitive data into LLMs and trusting providers not to misuse it, leak it, or fail to safeguard it, even when the intent is good. That is assertion-based trust. Cryptography solved this class of problem decades ago. Fully Homomorphic Encryption (FHE) solves it for AI. Data stays encrypted end to end, even during computation. The provider never holds plaintext. "We don't train on your data" stops being a claim and becomes an architectural impossibility.

In most of the world, trust means taking someone's word. You read the terms of service, a privacy policy, and a compliance certification, and you decide whether to believe them. This is assertion-based trust. And it is the dominant model in enterprise security today.

This is not a criticism of the people writing those policies. Providers face a genuine problem: proving a negative is hard. Ask anyone who has sat through a GDPR audit. The documentation is thorough, the intent is genuine, and at the end of it, you are still reading someone's attestation of what their systems do. Still someone's word.

Cryptography solved a version of this problem decades ago.

In cryptography, trust is not a claim. It is a verification. You don't trust a server because it introduced itself politely. You trust it because its certificate chains to a root you've already decided to trust. You don't trust a signed binary because the vendor said it's safe. You trust it because the signature verifies against a known key. The math checks. No one's word is required.
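To make "the signature verifies against a known key" concrete, here is a minimal sketch using textbook RSA from the Python standard library. It is an illustration under loud assumptions, not how production signing works: the key is built from two publicly known Mersenne primes, there is no padding scheme, and the names `sign`, `verify`, and `digest` are hypothetical helpers invented for this example. Real systems use vetted libraries and standardized schemes such as RSA-PSS or Ed25519.

```python
import hashlib

# Toy key material (assumption for illustration only): two publicly known
# Mersenne primes. Anyone can factor N, so this key protects nothing.
P = 2**127 - 1
Q = 2**89 - 1
N = P * Q                               # public modulus
E = 65537                               # public exponent
D = pow(E, -1, (P - 1) * (Q - 1))       # private exponent (Python 3.8+)


def digest(message: bytes) -> int:
    """Hash the message and reduce it into the range of the modulus."""
    return int.from_bytes(hashlib.sha256(message).digest(), "big") % N


def sign(message: bytes) -> int:
    """Vendor side: requires the private exponent D."""
    return pow(digest(message), D, N)


def verify(message: bytes, signature: int) -> bool:
    """Anyone's side: requires only the public values N and E."""
    return pow(signature, E, N) == digest(message)


binary = b"release-1.0 contents"
signature = sign(binary)

# The check is arithmetic, not a statement from the vendor.
print(verify(binary, signature))
print(verify(binary + b" (tampered)", signature))
```

The verifier never asks the vendor anything. It recomputes the hash, applies the public key, and compares. A modified binary fails the comparison regardless of what anyone claims about it.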

There is a principle in cryptography called Kerckhoffs's principle: a system should be secure even if everything about it, except the key, is public knowledge. The algorithms (AES, RSA, SHA) are open. Published. Attacked by the best minds in the field for decades. Their strength comes from surviving that scrutiny, not from being hidden. A secret algorithm is not a feature. It is a red flag.

This is the standard to which we should be holding trust more broadly.

Today, the gap between assertion and verification is becoming dangerous. Breaches are disclosed months after the fact. Fines follow. Data is already gone. Meanwhile, AI is accelerating vulnerability discovery, and the window between a flaw existing and being exploited is shrinking. The old model of trust (disclose later, remediate, pay the fine) does not hold in this environment.

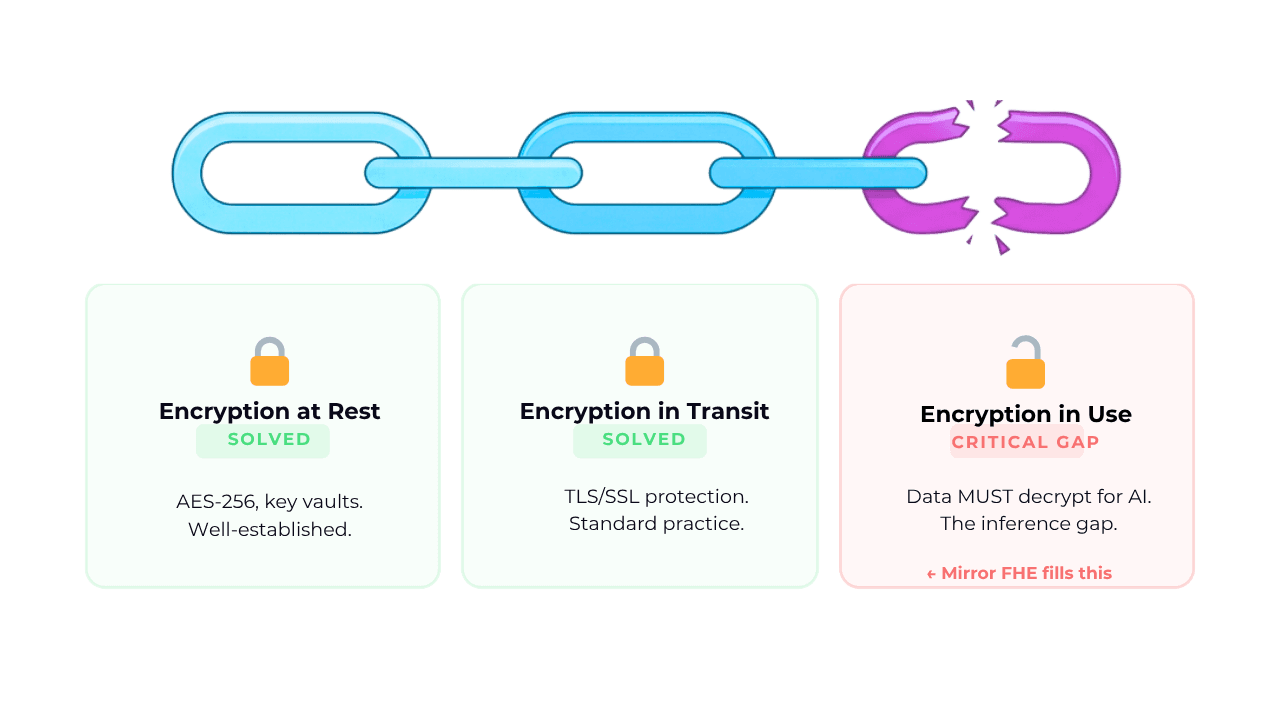

Encryption has done heavy lifting here. It underpins nearly everything we do on the web today. It solved the transport problem by ensuring confidentiality in transit. But it did not solve the trust problem, because to do anything useful with conventionally encrypted data, you have to decrypt it. The moment you decrypt, you are back in assertion territory. "We processed your data securely." Prove it.

This is where Fully Homomorphic Encryption (FHE) changes the equation.

FHE allows computation directly on encrypted data. The model, the service, and the agent never see plaintext. They receive ciphertext, operate on ciphertext, and return ciphertext. The LLM does not know what it received or what it sent.
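The ciphertext-in, ciphertext-out flow can be sketched with a toy example. One caveat up front: the code below uses the Paillier scheme, which is only additively homomorphic. It is not FHE. Real FHE schemes (for example BGV, CKKS, or TFHE) support both addition and multiplication on ciphertexts and are far more involved. Paillier is swapped in here only because it fits in a few lines of standard-library Python. The parameters are publicly known primes and far too small for real use, and the function names (`provider_add`, `provider_scale`) are hypothetical.

```python
import math
import secrets

# Toy parameters (assumption for illustration only): two publicly known
# Mersenne primes. Not secure; chosen so the example is self-contained.
P = 2**61 - 1
Q = 2**89 - 1
N = P * Q                                            # public key
N2 = N * N
LAM = (P - 1) * (Q - 1) // math.gcd(P - 1, Q - 1)    # private key
MU = pow(LAM, -1, N)                                 # private key helper (generator g = N + 1)


def encrypt(m: int) -> int:
    """Customer side: encrypt an integer 0 <= m < N under the public key."""
    while True:
        r = secrets.randbelow(N - 1) + 1             # fresh randomness per ciphertext
        if math.gcd(r, N) == 1:
            break
    return (1 + m * N) % N2 * pow(r, N, N2) % N2


def decrypt(c: int) -> int:
    """Customer side: only the holder of LAM and MU can do this."""
    return (pow(c, LAM, N2) - 1) // N * MU % N


def provider_add(c1: int, c2: int) -> int:
    """Provider side: adds the hidden plaintexts using ciphertexts only."""
    return c1 * c2 % N2


def provider_scale(c: int, k: int) -> int:
    """Provider side: multiplies the hidden plaintext by a public constant k."""
    return pow(c, k, N2)


# The customer encrypts, the provider computes blind, the customer decrypts.
c_sum = provider_add(encrypt(20), encrypt(22))
assert decrypt(c_sum) == 42
```

Note what the provider-side functions take as input: ciphertexts and the public modulus. The private values `LAM` and `MU` never appear in them, which is the structural property the argument here depends on.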

This loops back to where we started. "We don't train on your data." With FHE, the provider never held plaintext. The keys stay with the customer. Training on that data isn't a promise the provider is keeping. It's a capability they never had. That is verifiable trust. Not policy. Proof.

And this matters beyond a single privacy claim. As agents execute tasks, make decisions, and touch sensitive data at scale, the surface area of assertion-based trust expands with them. Every agent interaction is a new point where someone's word is standing in for a proof. FHE addresses this at the structural level. The data stays protected not because the provider committed to it, but because the system was never built with access in the first place.

The only durable answer is to make data structurally inaccessible, not policy-protected. Providers who build toward this aren't just ahead on compliance. They're building systems that can prove their own trustworthiness. Customers who demand it aren't being unreasonable. They're applying a standard that cryptography established decades ago.

Cryptography built this model. It works. The question is whether we apply it.

At Mirror Security, we make FHE work at the scale and speed that enterprise AI demands.